Researchers often face a critical modeling decision when the dependent variable has only two outcomes. If the outcome represents categories such as Yes/No, Pass/Fail, Default/No Default, or Survived/Died, linear regression can produce predicted values below 0 or above 1 and violates its own assumptions of normally distributed, constant-variance errors. In such cases, binary logistic regression provides the appropriate statistical framework.

This article explains what binary logistic regression is, how the binary logistic regression model works, the binary logistic regression formula, assumptions, and how to run and interpret binary logistic regression in SPSS. You will also see SPSS syntax and common interpretation mistakes that affect dissertations and journal submissions.

What Is Binary Logistic Regression?

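Binary logistic regression predicts the probability of a binary outcome using one or more independent variables. The dependent variable must contain exactly two categories, conventionally coded 0 and 1, with 1 marking the event of interest. If your variable uses other codes, SPSS recodes them internally and shows the mapping in the Dependent Variable Encoding table.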

Unlike linear regression, logistic regression does not predict the value of the outcome directly. It predicts the probability that the outcome equals 1, which always falls between 0 and 1, using a logistic (S-shaped) curve.

If you previously worked with linear models such as Simple Linear Regression in SPSS or Multiple Linear Regression SPSS, remember that those techniques assume a continuous dependent variable. Binary logistic regression is designed for dependent variables with exactly two categories.

Binary Logistic Regression Model

The binary logistic regression model estimates the log-odds of an event occurring.

Instead of modeling Y directly, the model estimates:

$$\ln\left(\frac{p}{1 - p}\right)$$

Where:

- $p$ = probability of the event (the outcome coded 1)

- $p / (1 - p)$ = odds

- $\ln\left(p / (1 - p)\right)$ = logit (log-odds), using the natural logarithm

The full model becomes:

$$\ln\left(\frac{p}{1 - p}\right) = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k$$

Each coefficient (B) represents the change in log-odds associated with a one-unit increase in the predictor, holding the other predictors constant. Exponentiating the coefficient gives Exp(B), also known as the odds ratio.
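The link between B and Exp(B) can be checked with a few lines of arithmetic. The Python sketch below is for illustration only (SPSS computes these quantities itself); the function names and the coefficient value 0.405 are hypothetical. It shows that adding B to the log-odds multiplies the odds by e^B, regardless of the starting probability.

```python
import math

def odds(p):
    """Odds of the event: p / (1 - p)."""
    return p / (1.0 - p)

def logit(p):
    """Log-odds (natural log): the left-hand side of the logistic model."""
    return math.log(odds(p))

def odds_after_unit_increase(p, b):
    """Odds after the log-odds rise by b, i.e. after a one-unit increase in a predictor with coefficient b."""
    return math.exp(logit(p) + b)

b = 0.405  # hypothetical coefficient B
for p in (0.2, 0.5, 0.8):
    # The ratio of new odds to old odds is the same for every p: it equals exp(b), i.e. Exp(B)
    print(p, odds(p), logit(p), odds_after_unit_increase(p, b) / odds(p), math.exp(b))
```

Because the ratio does not depend on p, Exp(B) can be reported as a single number for each predictor.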

For outcomes with more than two unordered categories, use the multinomial extension described in Multinomial Logistic Regression SPSS rather than the binary model.

Binary Logistic Regression Formula

Solving the logit equation for p gives the probability form:

$$p = \frac{1}{1 + e^{-(\beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k)}}$$

This transformation ensures predicted values remain between 0 and 1.

Linear regression applied to a 0/1 outcome can generate impossible probabilities such as −0.3 or 1.4. Logistic regression keeps every prediction within the 0 to 1 range, and that mathematical property makes it statistically appropriate for dichotomous outcomes.
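The probability formula can be evaluated directly to see the bounding effect. This Python sketch is illustrative only; the model (intercept −4.0, slope 0.5 per study hour) is hypothetical. The linear predictor z runs from −4 to 6 across the examples, yet every predicted probability stays strictly between 0 and 1.

```python
import math

def predicted_probability(intercept, coefs, xs):
    """p = 1 / (1 + e^-(b0 + b1*x1 + ... + bk*xk))."""
    z = intercept + sum(b * x for b, x in zip(coefs, xs))
    # Numerically stable form of the logistic function (avoids overflow for very negative z)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

# Hypothetical model: b0 = -4.0, b1 = 0.5 per study hour
for hours in (0, 8, 20):
    z = -4.0 + 0.5 * hours  # unbounded linear predictor (the log-odds)
    print(hours, z, round(predicted_probability(-4.0, [0.5], [hours]), 3))
```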

Binary Logistic Regression Assumptions

Binary logistic regression does not require normality or homoscedasticity. However, several assumptions still apply.

1. Binary Dependent Variable

The outcome must contain exactly two categories.

2. Independent Observations

Each case must represent an independent unit, so repeated measurements on the same person or matched pairs violate this assumption.

3. No Severe Multicollinearity

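Independent variables should not be highly correlated with one another. Preliminary checks often involve correlation procedures such as Pearson Correlation SPSS. Because pairwise correlations can miss collinearity involving three or more predictors, many researchers also inspect tolerance and variance inflation factor (VIF) values.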
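For the special case of exactly two predictors, the variance inflation factor reduces to VIF = 1 / (1 − r²), where r is their Pearson correlation. The Python sketch below is illustrative; the helper names and the study_hours/attendance values are hypothetical, and with more than two predictors VIF requires regressing each predictor on all the others. Cutoffs of 5 or 10 are commonly cited rules of thumb.

```python
import math

def pearson_r(a, b):
    """Pearson correlation between two equal-length lists."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)

def vif_two_predictors(a, b):
    """VIF = 1 / (1 - r^2); with exactly two predictors, both share this value."""
    r = pearson_r(a, b)
    return 1.0 / (1.0 - r * r)

# Hypothetical predictor values
study_hours = [2, 4, 5, 7, 9, 10]
attendance = [60, 70, 68, 80, 88, 95]
print(round(pearson_r(study_hours, attendance), 3), round(vif_two_predictors(study_hours, attendance), 2))
```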

4. Linearity of the Logit

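Continuous predictors must show a linear relationship with the log-odds of the dependent variable. A common check is the Box-Tidwell approach: add an interaction between each continuous predictor and its natural logarithm (X × ln X), and treat a significant interaction term as evidence that the relationship is not linear.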

5. Adequate Sample Size

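Researchers commonly use the 10-events-per-variable guideline: at least 10 events (cases in the less frequent outcome category) for each estimated predictor parameter. The rule of thumb helps keep coefficient estimates stable.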
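The guideline is simple arithmetic. In this Python sketch the function names and the sample (60 failures, 340 passes) are hypothetical; note that a categorical predictor with k categories uses k − 1 parameters.

```python
def max_predictors(n_event, n_nonevent, events_per_variable=10):
    """Rule-of-thumb ceiling on predictor parameters: limiting count // EPV."""
    limiting = min(n_event, n_nonevent)  # the less frequent outcome category
    return limiting // events_per_variable

def events_per_variable(n_event, n_nonevent, n_parameters):
    """Observed events per predictor parameter for a planned model."""
    return min(n_event, n_nonevent) / n_parameters

# Hypothetical sample: 400 students, 60 failed and 340 passed
print(max_predictors(60, 340))          # 6
print(events_per_variable(60, 340, 3))  # 20.0
```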

Notice that logistic regression does not require a normality test, which differentiates it from many parametric procedures.

Binary Logistic Regression vs Logistic Regression

The term “logistic regression” refers to a broader family of models.

Binary logistic regression handles two outcome categories.

Multinomial logistic regression handles more than two unordered categories.

Ordinal logistic regression handles ordered categories.

Binary logistic regression represents one specific form within this family. The correct model depends entirely on the structure of the dependent variable.

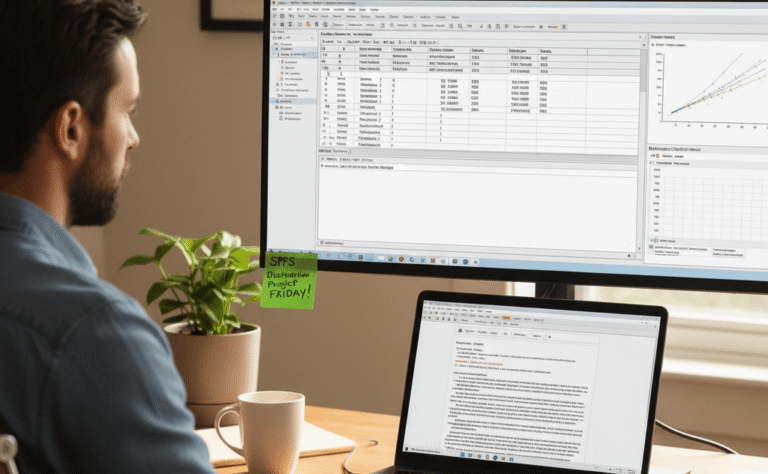

How to Run a Binary Logistic Regression in SPSS

Follow these steps:

- Click Analyze

- Select Regression

- Choose Binary Logistic

- Move the dependent variable into the Dependent box

- Move predictors into the Covariates box (click Categorical to declare any categorical predictors)

- Click Options and select:

  - Hosmer-Lemeshow goodness-of-fit

  - CI for exp(B): 95%

- Click Continue, then OK

SPSS produces output tables including:

- Omnibus Tests of Model Coefficients

- Model Summary

- Hosmer and Lemeshow Test

- Variables in the Equation

The procedure parallels other regression frameworks such as those outlined in How to Run a Linear Regression in SPSS, although the estimation method differs: logistic regression uses maximum likelihood rather than ordinary least squares.
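Logistic regression coefficients have no closed-form least-squares solution; they are found iteratively by maximizing the likelihood. The Python sketch below shows a minimal Newton-Raphson version for a single predictor. It is an illustration, not SPSS's implementation: the function name is hypothetical, the convergence rule is simplified, and max_iter=20 only loosely mirrors ITERATE(20). With a binary predictor, the fitted slope should equal the log of the sample odds ratio, which gives a quick sanity check. Perfectly separated data would make the estimates diverge.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logit_1d(x, y, max_iter=20, tol=1e-8):
    """Maximum-likelihood fit of logit(p) = b0 + b1*x by Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        # Score vector (gradient of the log-likelihood) and information matrix entries
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = sigmoid(b0 + b1 * xi)
            w = p * (1.0 - p)
            g0 += yi - p
            g1 += (yi - p) * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        # Newton step: solve the 2x2 system H * delta = g
        det = h00 * h11 - h01 * h01
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if max(abs(d0), abs(d1)) < tol:
            break
    return b0, b1

# Binary predictor: odds are 1/3 when x = 0 and 3 when x = 1, so the odds ratio is 9
x = [0, 0, 0, 0, 1, 1, 1, 1]
y = [0, 0, 0, 1, 0, 1, 1, 1]
b0, b1 = fit_logit_1d(x, y)
print(b0, b1, math.exp(b1))
```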

How to Interpret Binary Logistic Regression Results in SPSS

Interpretation focuses on four core areas.

Omnibus Test

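This likelihood-ratio chi-square test compares the fitted model with a constant-only model. A significant p-value (p < .05) indicates that the predictors, taken together, predict the outcome better than the constant-only model.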
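The Omnibus statistic is the drop in −2 log-likelihood (−2LL) from the constant-only model to the fitted model, tested against a chi-square distribution whose degrees of freedom equal the number of estimated predictor parameters. This Python sketch uses only the standard library; chi2_sf is a hypothetical helper based on the closed-form chi-square tail for integer degrees of freedom, and the −2LL values are made up.

```python
import math

def chi2_sf(x, df):
    """Upper-tail probability P(Chi-square(df) >= x) for integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        k, q = 2, math.exp(-half)              # exact tail for df = 2
    else:
        k, q = 1, math.erfc(math.sqrt(half))   # exact tail for df = 1
    while k < df:
        # Recurrence: Q(x; k + 2) = Q(x; k) + (x/2)^(k/2) * e^(-x/2) / Gamma(k/2 + 1)
        q += math.exp((k / 2.0) * math.log(half) - half - math.lgamma(k / 2.0 + 1.0))
        k += 2
    return q

def omnibus_test(null_minus2ll, model_minus2ll, n_parameters):
    """Likelihood-ratio chi-square, its df, and its p-value."""
    chi_sq = null_minus2ll - model_minus2ll
    return chi_sq, n_parameters, chi2_sf(chi_sq, n_parameters)

# Hypothetical -2LL values for a model with three predictors
print(omnibus_test(520.4, 480.1, 3))
```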

Model Summary

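This table reports the −2 Log likelihood together with two pseudo-R² measures, Cox & Snell R² and Nagelkerke R². Unlike R² in linear regression, pseudo-R² values do not represent the proportion of variance explained; treat them as rough indicators of model strength. Nagelkerke R² rescales Cox & Snell R² so that its maximum possible value is 1.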
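Both pseudo-R² values follow from the −2LL of the constant-only and fitted models. For a single block entered together, the constant-only value equals the model's −2LL plus the Omnibus chi-square. The Python sketch below is illustrative; the function name, sample size, and −2LL values are hypothetical.

```python
import math

def pseudo_r2(null_minus2ll, model_minus2ll, n):
    """Cox & Snell and Nagelkerke R^2 from -2 log-likelihoods and sample size n."""
    # Cox & Snell: 1 - (L0 / L1)^(2/n), written with -2LL values
    cox_snell = 1.0 - math.exp((model_minus2ll - null_minus2ll) / n)
    # Largest value Cox & Snell can reach for this constant-only likelihood
    max_cox_snell = 1.0 - math.exp(-null_minus2ll / n)
    return cox_snell, cox_snell / max_cox_snell

# Hypothetical: constant-only -2LL = 520.4, model -2LL = 480.1, n = 400
print(pseudo_r2(520.4, 480.1, 400))
```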

Hosmer-Lemeshow Test

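A non-significant result (p > .05) indicates no evidence of poor fit. Interpret it cautiously: the test has low power in small samples and can flag trivial discrepancies in very large ones.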
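The textbook deciles-of-risk version of the statistic sorts cases by predicted probability, splits them into groups, and compares observed with expected events in each group; the result is referred to a chi-square distribution with groups − 2 degrees of freedom. This Python sketch is an approximation: SPSS forms its groups differently (for example, in how it handles tied probabilities), so values may not match the SPSS table exactly. The function name and example data are hypothetical.

```python
def hosmer_lemeshow(y, p, groups=10):
    """Deciles-of-risk Hosmer-Lemeshow statistic and its degrees of freedom."""
    pairs = sorted(zip(p, y))  # order cases by predicted probability
    n = len(pairs)
    stat = 0.0
    for g in range(groups):
        chunk = pairs[g * n // groups:(g + 1) * n // groups]
        if not chunk:
            continue
        n_g = len(chunk)
        observed = sum(outcome for _, outcome in chunk)  # observed events in the group
        expected = sum(prob for prob, _ in chunk)        # expected events in the group
        # One term combining the event and non-event cells of the group
        stat += (observed - expected) ** 2 / (expected * (1.0 - expected / n_g))
    return stat, groups - 2

# Hypothetical outcomes and predicted probabilities for 10 cases, 5 groups
y = [0, 0, 1, 0, 1, 0, 1, 1, 1, 1]
p = [0.1, 0.2, 0.25, 0.3, 0.45, 0.5, 0.6, 0.7, 0.8, 0.9]
print(hosmer_lemeshow(y, p, groups=5))
```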

Variables in the Equation

Focus on:

- B (log-odds coefficient)

- Sig. (p-value)

- Exp(B) (odds ratio)

If Exp(B) = 1.50, a one-unit increase in the predictor multiplies the odds of the outcome by 1.50, an increase of 50%, holding the other predictors constant.

If Exp(B) = 0.70, the predictor decreases the odds by 30%.
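The percentage change in the odds is (Exp(B) − 1) × 100, and a 95% confidence interval for Exp(B) is commonly obtained by exponentiating B ± 1.96 × S.E. (a Wald-type interval). The Python sketch below is illustrative; the function names and the B and S.E. values are hypothetical.

```python
import math

def percent_change_in_odds(exp_b):
    """(Exp(B) - 1) * 100: percentage change in the odds per one-unit increase."""
    return (exp_b - 1.0) * 100.0

def exp_b_confidence_interval(b, se, z=1.959964):
    """Wald-type 95% CI for Exp(B): exponentiate B - z*SE and B + z*SE."""
    return math.exp(b - z * se), math.exp(b + z * se)

print(percent_change_in_odds(1.50))  # 50.0
print(percent_change_in_odds(0.70))  # about -30
# Hypothetical B and S.E. as they might appear in Variables in the Equation
print(exp_b_confidence_interval(0.405, 0.12))
```

An interval that excludes 1 corresponds to a coefficient that is significant at the 5% level under the Wald test.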

Researchers must interpret odds ratios carefully. Odds differ from probabilities: an Exp(B) of 1.50 means the odds rise by 50%, not that the probability rises by 50%. Misinterpreting Exp(B) remains one of the most common dissertation errors.
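Converting an odds ratio back to probabilities shows why the distinction matters: the same Exp(B) changes the probability by different amounts depending on the baseline. In this Python sketch (function name hypothetical), Exp(B) = 1.50 moves a baseline probability of .10 to about .143, .50 to .60, and .90 to about .931.

```python
def probability_after_odds_ratio(baseline_p, odds_ratio):
    """Apply an odds ratio to a baseline probability and convert back to a probability."""
    new_odds = baseline_p / (1.0 - baseline_p) * odds_ratio
    return new_odds / (1.0 + new_odds)

# The same Exp(B) = 1.50 shifts probabilities by different amounts
for p0 in (0.10, 0.50, 0.90):
    p1 = probability_after_odds_ratio(p0, 1.50)
    print(p0, round(p1, 3), round(p1 - p0, 3))
```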

For automated predictor selection, such as forward or backward stepwise methods, see Stepwise Logistic Regression SPSS.

SPSS Syntax for Binary Logistic Regression

SPSS syntax ensures reproducibility and transparent reporting.

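Example (a blank line ends a command in SPSS syntax by default, so keep the subcommands on consecutive lines):

```
* Binary logistic regression of pass (0 = fail, 1 = pass) on three predictors.
LOGISTIC REGRESSION VARIABLES pass
  /METHOD=ENTER study_hours attendance motivation
  /PRINT=CI(95) GOODFIT
  /CRITERIA=PIN(.05) POUT(.10) ITERATE(20).
```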

This syntax:

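- Enters predictors simultaneously (METHOD=ENTER)

- Requests 95% confidence intervals for Exp(B)

- Produces the Hosmer-Lemeshow goodness-of-fit statistics (GOODFIT)

- Sets estimation criteria: PIN and POUT apply only to stepwise methods, and ITERATE(20) caps the number of maximum-likelihood iterations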

Researchers who use R often implement similar models using generalized linear modeling procedures such as those described in Logistic Regression in R.

When Should You Use Binary Logistic Regression?

Use binary logistic regression when:

- The dependent variable contains two categories

- You want to estimate event probability

- You want interpretable odds ratios

- You analyze medical, behavioral, financial, or social science data

Binary logistic regression plays a central role in applied research because many real-world outcomes naturally occur in two categories.

Conclusion

Binary logistic regression provides a statistically sound method for modeling dichotomous outcomes. The binary logistic regression model transforms probabilities into log-odds, estimates coefficients using maximum likelihood, and produces interpretable odds ratios.

Correct model specification, assumption checking, and accurate interpretation determine the strength of your findings. When researchers understand the structure of the logit model and interpret Exp(B) correctly, their results become both statistically valid and academically defensible.